The position statement warns that AI systems trained on limited data sets could systematically underestimate risk in marginalized populations.

The Association for Diagnostics & Laboratory Medicine (ADLM) released a position statement calling on Congress and federal agencies to modernize laboratory regulations and to implement policies that ensure clinical AI systems are safe and effective, particularly for historically marginalized patient populations.

The statement warns that AI models used in laboratory medicine could pose significant risks to patients if trained on limited, low-quality, or inconsistent data. AI health tools often rely on historical data sets that underrepresent certain racial and ethnic groups, age ranges, and socioeconomic groups, which can lead to systematic underestimation of risk or disease misclassification in these populations.

“Clinical laboratories are uniquely positioned to help develop and assess the integration of AI health tools into testing workflows and, most importantly, how they influence patient test results and health outcomes,” says ADLM president Dr Paul J Jannetto in a release. “We therefore urge the federal government to draw on the expertise of laboratory medicine professionals in order to develop AI regulations that support innovation, as well as transparent, consistent performance monitoring of this potentially revolutionary technology.”

Three Key Federal Recommendations

ADLM outlined three primary recommendations for federal action to address bias in laboratory AI systems:

Congress should work with federal agencies to update existing laboratory laws and regulations, including the Clinical Laboratory Improvement Amendments (CLIA), to explicitly include AI systems within their scope.

Federal health agencies should partner with professional societies to convene laboratory medicine experts and informatics professionals to develop consensus guidelines for validating and verifying AI tools in laboratory medicine.

Federal agencies should expand and support initiatives to harmonize laboratory test results and standardize data reporting across the healthcare system.

The organization also called on AI developers to work with regulators and healthcare organizations to implement measures promoting data diversity and reducing bias in laboratory AI applications. Developers and vendors should ensure clinical laboratories have access to the data and technical resources needed to independently verify and validate algorithm performance.

AI’s Promise and Perils in Lab Medicine

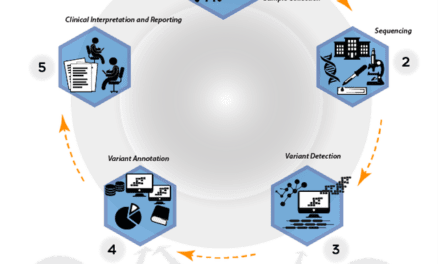

ADLM notes that laboratory medicine plays a critical role in patient diagnosis and care, with AI offering potential benefits including enhanced diagnostic accuracy, improved laboratory workflow efficiency, and more precise, data-driven clinical decision-making.

However, AI models are only as accurate as their training data, which creates risks when systems learn from biased or incomplete information. These accuracy limitations can be particularly problematic for AI tools that influence test interpretation, diagnosis, and treatment decisions for vulnerable patient populations.

The position statement emphasizes that proper monitoring and validation of AI systems used in laboratory settings will be essential to realizing the technology’s benefits while minimizing potential harm to patients from underrepresented demographic groups.

ID 115899450 © Dzmitry Ryzhykau | Dreamstime.com