A highly criticized JAMA study raises public anxiety and professional frustration

Roundtable moderated by Steve Halasey

Since the middle of March, pathologists across the country have been expressing concern and frustration, after a study in the Journal of the American Medical Association (JAMA) seemed to suggest that nearly 25% of pathologists’ diagnostic interpretations of breast biopsy slides might be in error.1

The principal author of the study was Joann G. Elmore, MD, MPH, a professor of medicine at the University of Washington. For the study, Elmore and colleagues examined how closely pathologists’ diagnoses agreed with reference diagnoses established by a consensus panel. The study included 115 pathologists who interpret breast biopsies in clinical practices in eight US states.

Taking all the cases together, the pathologists who participated in the study provided a total of 6,900 individual interpretations for comparison with the consensus-derived reference diagnoses. Overall, participants’ interpretations agreed with the consensus panel’s reference diagnoses in just 75% of cases.

The concordance rate for cases of invasive breast cancer was 96%. The participants agreed with the consensus-derived reference diagnosis for 87% of cases involving benign lesions without atypia, and for 84% of cases involving ductal carcinoma in situ (DCIS). However, the concordance rate for cases of atypia was less than half—just 48%.

Pathologists were quick to discredit the Elmore study, pointing out that it employed procedures that do not reflect the real-world practice of anatomic pathology. But in the meantime, lay media outlets had picked up and sensationalized the implied findings of the study, raising public concern about the trustworthiness of diagnostic pathology reports of all kinds.

To find out more about this issue, CLP recently spoke with several experts who shared their views about the Elmore study, pathology practice standards, and the role of advanced technologies in improving pathology findings. Members of the panel were Kenneth J. Bloom, MD, chief medical officer at Clarient Diagnostic Services, Irvine, Calif; David J. Dabbs, MD, professor of pathology at the University of Pittsburgh Medical Center, and chief of pathology at Magee-Women’s Hospital; and Eric E. Walk, MD, chief medical officer for Roche Diagnostics, Tucson, Ariz.

CLP: This is not the first time that concern has arisen about concordance among pathologists. A previous round of concern led to publication of ASCO/CAP guidelines on HER2 testing in October 2013.2 How would you describe the level of concern over this issue before and since publication of those guidelines?

Kenneth J. Bloom, MD: The HER2 guidelines were more about standardization than concordance. They mandated validation and proficiency testing before reporting patient results. These mandates ensured everyone was using the same criteria and had basic competency.

David J. Dabbs, MD: Certainly, the ASCO/CAP guidelines were generated as a response to discordance between central and community HER2 testing. A lot of things have changed as a result of those guidelines, including specific analysis of preanalytic, analytic, and postanalytic factors.

But the issues of concordance addressed by the ASCO/CAP guidelines are quite different from the issues involved in furthering concordance in the morphologic diagnosis of breast disorders. In breast morphologic diagnosis, the issues of concordance that arise are similar to those that arise in many other areas of medicine, including mammography screening, interpretation of colposcopy findings, and variations in the treatment of hypertension. In every field of medicine, there are difficult “grey areas” where there can be significant variations in the interpretations of highly qualified professionals with expertise in the field. The goal is to minimize the effects of the uncertainty that exists in those grey areas.

Pathologists do not practice in a vacuum. In daily practice, no one hands them a single slide and asks, “What do you think this is?”

In the case of a core biopsy of the breast, for instance, pathologists are well aware of the practice patterns of their institutions, and they know precisely what steps will be followed if they call that biopsy atypical ductal hyperplasia, as opposed to low-grade ductal carcinoma in situ. And because pathologists understand the ramifications of this call, the practices of their institution rightly influence how they call a specimen.

Most pathologists are rightly conservative about calling low-grade ductal carcinoma in situ on the basis of a core biopsy sample alone, because they may not have the patient’s clinical information or imaging studies. The specimen might have been taken for calcifications. In short, they really don’t have a good idea of how large the lesion could potentially be.

But by being conservative and calling the biopsy atypical ductal hyperplasia, the pathologist knows that the entire lesion will be excised, and the entire specimen can then be examined using multiple slides. That kind of evidence provides a much sounder basis for making a major diagnostic call that may be life-altering for the patient, ultimately determining whether they must undergo radiation therapy.

This is a very complex issue that is not cut and dried. But in no case can the real-world practice of medicine be represented by a study that starts off with “Here’s one slide, tell me what you think.”

Eric E. Walk, MD: Most people in the fields of pathology and oncology would agree that the ASCO/CAP HER2 guidelines have been successful in significantly reducing the level of concern regarding variability in the technical and interpretative aspects of HER2 testing that existed prior to their implementation. In my opinion, the concerns associated with HER2 testing before issuance of the ASCO/CAP guidelines were more legitimate than the concerns raised by the Elmore study, both because of the flawed nature of the study itself and because of the inaccurate and oversimplified conclusions drawn and promoted by the mass media.

CLP: How well does the recent JAMA study by Elmore et al. replicate current pathology practices for review of breast biopsies? What aspects of professional practice does it represent best?

Bloom: The Elmore article is a poor representation of the actual practice of pathology. It did a reasonable job of demonstrating that there is pathologist variability in interpreting borderline lesions when looking only at a single slide without the context of the patient and the sample.

Dabbs: The paper by Elmore is categorically not a valid study of inter-observer agreement among pathologists in the real world of breast pathology practice. Although it comes across as a research study, it does not actually reflect the real world of breast pathology practice. The authors themselves touch on this issue in the discussion section of their paper.

Instead, because of its design, this is a fabricated study with severe limitations that serves only to raise anxiety among women and the public in general.

First of all, the study was heavily weighted toward more difficult borderline lesions, for which many times there is no definitive diagnosis. In specimen slides representing such instances, a pathologist may view a continuous spectrum of morphologic changes, and may form an opinion based on experience; the context of the specimen type; the clinical situation of the patient, including age; and the practice patterns of the pathologist’s specific institution, which can vary widely even for the same diagnostic category.

Second, and of critical importance, the study authors intentionally chose to provide participants with just one slide to represent each case. According to the authors, this was done solely for the purpose of increasing participation among pathologists. But in the real world, pathologists always have more than one slide to look at. They have access to additional slides and additional levels, and they also have the ability to perform special processing, including immunohistochemistry, in order to arrive at a correct diagnosis. In my view, the authors’ decision represents a flaw in the design of the study that categorically disqualifies it from even remotely reflecting the practice of breast pathology in the United States—or anywhere else for that matter.

Third, the study gave no consideration to the fact that pathologists are subject to clear federal regulations, state regulations, and other quality assurance schemes that require them to seek appropriate consultation among colleagues when they encounter a lesion that is difficult to diagnose. Except for the three-member panel of expert breast pathologists assigned to be the authoritative voice of this study, none of the pathologist participants in the study were afforded the opportunity to practice the way they are required to do in the real world.

Finally, it’s of interest to note that this paper cites an earlier publication, “Understanding diagnostic variability in breast pathology: lessons learned from an expert consensus review panel,” for which JG Elmore was one of the senior authors.3 The authors of the earlier study prescribed a set of rules to enable pathologists to reach consensus on breast pathology diagnoses. But in the recent JAMA study, those rules were completely ignored.

Walk: The Elmore study does not represent current pathology practice at all; instead, it creates an artificial interpretative framework specifically designed and biased to exaggerate inter-reader variability. The study was stacked heavily in favor of difficult, challenging cases such as atypical hyperplasia. The unusually difficult nature of these cases is apparent from the fact that even the three-member reference panel of expert breast pathologists achieved only 80% concordance with the final consensus diagnoses for atypia.

CLP: When deciding on the level of review needed to establish concordance among pathology experts, are the interests of patients, pathologists, and payors fully aligned? Can current practices address the concerns of all stakeholders?

Dabbs: There is probably significant variation among payors when it comes to whether they will cover a second opinion. Payors need to be aligned, because the shift in medicine nowadays is towards quality. If payors were fully engaged and actually practicing what they preach, they would definitely cover second-opinion reviews of biopsies.

At our institution, we consider it a best practice for our surgeons to review whatever biopsies a patient had done at another institution, before undergoing surgery at our institution. This practice is good for the patient, but also good for the pathologist, good for the surgeon, and good for the hospital. Because if a patient’s treatment goes south, all of those healthcare providers will wind up being named in litigation.

If we really want to maximize patient safety, then we also need to minimize risk. In this context, a second review should be performed because it’s the right thing to do. No one wants to perform surgery on a patient whose breast biopsy was called invasive cancer, only to find out that it was actually a papilloma. That would not be the right thing to do, and everyone would suffer as a result of that.

There’s no question that payors need to be aligned with the concept of second review—particularly for the subset of breast lesions that are notoriously difficult, and for which there will inevitably be a variation of opinion. With certain types and levels of atypia, variation of opinion expresses a legitimate spectrum of professional judgment, for which definitive surgery is usually the final arbiter.

Bloom: Payors and patients are aligned. They expect that the result is accurate. Pathologists understand that the result is an interpretation—their practice of medicine—which is not an absolute science. Most pathologists would get an intradepartmental consultation or a second opinion on atypical lesions or first diagnoses of malignancy.

CLP: The Elmore study suggests a need for mandatory second reviews of many breast biopsies, especially those classified as atypia. Do you agree?

Dabbs: I see these as two separate issues. The paper itself does not offer sufficiently clear data from real-world practice settings to support that conclusion. Because the study data are flawed, the conclusion simply doesn’t follow from the data supplied in the study.

On the other hand, in the real world it’s always a good idea to seek out a second opinion whenever a biopsy suggests that further surgery is going to be needed. That should be true not only for breast biopsies, but also for prostate, liver, lung, or any other tissue biopsies. Many agree this is a practice that maximizes quality and patient safety, and minimizes patient risks, and should therefore be applauded.

Bloom: I believe that most pathologists show atypical breast lesions to a colleague and request a second opinion if they feel it might represent a malignancy. This should be standard practice.

Walk: I also believe in the general practice of second reviews, especially for diagnoses that significantly affect patient therapy. In the specific case of breast atypia, however, the Elmore study really doesn’t suggest a specific action; because of the absence of correlative outcomes data, we don’t know whether the issue is over- or under-diagnosis. This flaw, which is raised by the authors themselves in the discussion section, is another weakness that jeopardizes the validity of the study.

It’s an important point, because the purpose of “atypia” as a diagnosis in the first place is to assign some level of risk for progression to invasive breast cancer. In this particular study we don’t have the outcomes information needed to know which judgments about a particular sample were actually correct. Among the patients whose samples were called atypia by the expert panel versus those called atypia by members of the study panel, we don’t know which patients eventually developed invasive breast cancer.

It is well accepted in the literature that atypical hyperplasia is a risk factor for invasive cancer. Last year, in fact, the Mayo Clinic published the results of one of the largest longitudinal studies of women with atypical hyperplasia, which followed 698 women for a mean period of more than 12 years, and documented that 20% of these women did develop invasive cancer.4 But for the Elmore study, which lacks this kind of outcomes information, one must be very careful about interpreting the conclusions and the results.

CLP: The Elmore study found lower rates of concordance among pathologists from non-academic settings, pathologists who interpret lower weekly volumes of breast cases, and pathologists from smaller practices. Does this suggest a need within that population for greater training efforts, more consultation, greater use of technology—or what?

Bloom: It is not surprising that pathologists who lack subspecialty expertise and see significantly fewer breast samples than breast pathologists would have a higher discordance rate. Additional training is always helpful, but the simplest solution would be to show the case to a pathologist with subspecialty expertise. The upsurge in digital pathology solutions should make this a real possibility.

Dabbs: Experience counts for a lot. Pathologists who recognize their limitations almost invariably seek proper consultation. But again, the Elmore study was weighted heavily with atypia and difficult borderline lesions. If you’re showing a lot of those to people who don’t see very many of them—meaning pathologists from non-academic and smaller practices—the resulting lack of concordance can’t be considered much of a surprise.

Whether the question involves a breast biopsy or a prostate biopsy, pathologists who find themselves in over their heads and wanting to seek consultation should do so. At Magee-Women’s Hospital, we receive 30 breast specimens a day, on average, and approximately half of them are core biopsies and half of them are resection specimens. Whether they are benign, atypical, or malignant, all core biopsy specimens are submitted for a documented review by a second pathologist before sign-off.

We also hold a daily patient safety conference where we review difficult or interesting slides, and we get patient safety CME credits for attending. We have a culture in which our pathologists freely show cases to one another, and by doing so we have each learned to recognize how others will call certain things. As a result of such daily interactions, we have far greater agreement on cases than might otherwise be the case.

It’s not uncommon for pathologists to show cases to one another. In fact, JG Elmore has published on this very topic, in a study for which she queried pathologists about how they conduct consultations. But in this study, as we’ve noted, except for the three-member panel of expert pathologists, the study participants did not have an opportunity for consultation.

CLP: Eric, does that match your sense of performance in the pathology community, and especially among those who don’t see many cases?

Walk: Absolutely. It’s very clear that more-experienced, fellowship-trained breast pathologists deliver more accurate and reproducible diagnoses compared to those with less experience. This relates to another weakness of the Elmore study, which is that 95% of the pathologists participating in the study were not fellowship-trained in breast pathology, and 78% were not considered by their colleagues to be experts in breast pathology. This bias likely drove much of the observed “discordance,” and prevented the authors from achieving statistical significance in their examination of the role of breast pathology expertise on overall concordance. Had the study been adequately powered with trained breast pathologists, it would have been more informative to stratify the study data properly according to the participants’ level of experience.

This real-world gap in expertise is an obvious area where technologies, in the form of molecular pathology techniques and molecular markers, could potentially help. But unfortunately, our understanding of the underlying molecular biology in this particular area of atypical hyperplasia is underdeveloped. Those of us in the field of molecular pathology and oncology would love to have immunohistochemistry or in situ hybridization markers to help pathologists of all experience levels reduce the subjectivity of their diagnoses in this area.

The situation is analogous to squamous intraepithelial lesions of the lower anogenital tract, a series of morphologic diagnoses that has historically suffered from poor reproducibility. In 2012, the College of American Pathologists and the American Society for Colposcopy and Cervical Pathology published their lower anogenital squamous terminology (LAST) consensus recommendations, which include the use of the p16 biomarker in specific diagnostic situations involving intraepithelial neoplasia.5 The field needs similar biomarkers in the area of atypical breast lesions, but the science hasn’t caught up and we don’t have such markers today.

CLP: Media interpretations tend to over-simplify the Elmore study findings, and many feel they undermine public confidence in pathology reports. How can the media and public better understand the findings?

Dabbs: The media are a problem here. Most media representatives do not know how to read scientific papers, and in this case they have created a lot of havoc by raising unnecessary questions based on a flawed study, and raising significant anxiety among all sorts of people from all walks of life—most importantly among women who have only heard the media sound bites.

It is ironic that Elmore is responsible for creating such an inflammatory paper. Her previous papers have looked at quality issues and variation in the interpretation of mammograms, coronary vasculature angiograms, and a host of other things. And in those other papers, she always mentions the anxiety that women experience over atypia, abnormal mammograms, and so on. And yet, her latest study design has resulted in a paper that is utterly anxiety-provoking.

I don’t know of a good way to deliver papers like this to the media. The media are out to generate excitement so that people will buy newspapers or pay attention to their reports, and I don’t know if there’s a better way to introduce scientific findings into such an environment. Nancy Davidson, MD, and David Rimm, MD, PhD, wrote a nice and appropriate editorial on this topic that appeared in the same JAMA issue as the Elmore study, but apparently it wasn’t enough to allay the fears that were inappropriately generated by the study itself.6

Bloom: The findings strongly suggest that pathologists have excellent concordance for lesions that they call invasive or benign. In the real world, cases of atypia and ductal carcinoma in situ, as well as invasive breast cancer, are routinely shown to a second pathologist. The public should feel confident that potentially controversial cases have a second set of eyes reviewing the interpretation. I think we should be moving toward having a subspecialty pathologist review all atypical and malignant diagnoses, whether breast, heme, lung, colon, and so on. Digital pathology networks could make this possible.

Walk: Unfortunately, the mass media drew oversimplified and incorrect conclusions from the Elmore study, focusing on the area of atypia without fully understanding the study design and its conclusions. My concern is that the public’s perception in the wake of the Elmore study is that patients cannot trust reports coming from pathologists in general, which I think would be a horrible tragedy.

At baseline, the field of pathology has a public relations issue, because patients don’t really know or understand the role of the pathologist. So on the one hand, it’s good that patients hear media coverage like this so they can begin to understand the role of the pathologist. But unfortunately, with the Elmore study coverage they’re getting the wrong impression about the ability of pathologists to deliver accurate, treatment-guiding information—even though there’s no evidence to suggest that pathology diagnoses in general are causing patients harm.

Even within the Elmore study—again, with the caveat that we don’t have corresponding outcomes data for any of the diagnoses—it’s worthwhile looking again at the concordance rates. For invasive cancer, for benign breast disease without atypia, and for ductal carcinoma in situ, the rates of concordance among the study participants were all very high—96%, 87%, and 84%, respectively.

Like David, I don’t know a better way to prevent the media from misrepresenting studies such as this one. Perhaps it will always fall to experts in the field to respond and try to correct media misinterpretations.

CLP: How can technology improve concordance? How do molecular pathology, digital pathology, and whole-slide imaging contribute to better performance?

Bloom: Technology is critical to improving performance. When specimens appear similar but behave differently, we need to identify what distinguishes those lesions. That may require technologies that are slide-based, like immunohistochemistry or fluorescence in situ hybridization, or molecularly based, like polymerase chain reaction or next-generation sequencing.

In the future, the technologies may also be image-based, using approaches like machine learning. Things that look identical to us may look different to a machine. Whatever the mechanism, more data generally means better results.

Dabbs: At UPMC, digital pathology is a big issue. Although we’re not using it for primary diagnosis yet, since a lot of the digital pathology platforms are still awaiting FDA clearance for that purpose, we are using it for internal consultations, for some external consultations, and for teaching. For the moment, the platforms offer excellent tools for teaching, including libraries of difficult lesions that we can make available to the trainees in our department. This is a step in the right direction toward the eventual use of whole-slide imaging.

What’s critically important in this area is the molecular morphology of the specimens under examination. We’ve moved way beyond the age of the grind and bind techniques that we used to use to examine hormone receptors. Digital pathology permits pathologists to actually have eyes on the lesions they are calling atypia, and to use probes for messenger RNA, microRNA, or other molecular features on the slide, in order to document the molecular abnormalities in these lesions.

My caution is aimed at what’s currently going on with invasive cancer and gene expression profiling. For example, there are currently several different gene expression platforms available, but, believe it or not, the variance among the systems’ risk assessments of tumor specimens is far greater than the variance in pathologists’ grading of those very same tumors. It’s really frightful what’s being created out of those proprietary black boxes.

What I’m cautiously hoping we’ll achieve is getting the right molecular tools, putting eyes on them so that we know we’re looking at a lesion, and making available an unequivocal interpretation for that type of lesion that is consistent among pathologists and over time. But right now, in the case of gene expression profiling for breast cancer, we don’t have that.

Instead, what we have is a mishmash of different platforms giving us different risk assessments for the very same tissue block on the very same patient. We ended up here, in part, because these systems were created without pathologist input. So now we’re looking at significant diagnostic variations, and our colleagues who once thought that the black boxes were objective don’t think that anymore.

CLP: Eric, Roche is very active in this field, and all of these technologies are things that Roche is working on. What is your thinking about the current status of digital pathology?

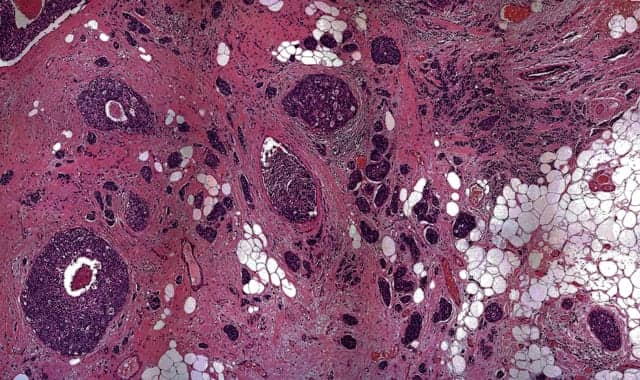

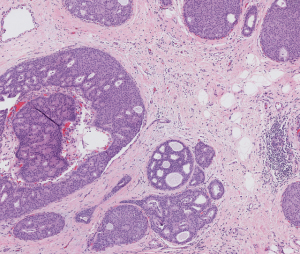

Ductal carcinoma in situ of the breast, hematoxylin and eosin stain. Photo courtesy Roche Diagnostics.

Walk: Molecular pathology has and will continue to have a transformative effect on how patients are diagnosed and treated. In the case of breast atypia for example, we actually don’t care very much about the morphologic “label” of atypia itself, but we do care about identifying patients who are at high risk of progressing to invasive breast cancer.

Ideally, we would like to have highly specific and highly sensitive markers that enable us to determine with certainty which individual patients with various premalignant changes will go on to develop breast cancer, and which ones will never develop breast cancer. We don’t have these kinds of markers right now, but certainly Roche Diagnostics is interested in markers to address this unmet medical need, and it’s fair to say that this is a key goal for the entire field of breast pathology. At present, we don’t understand whether atypical breast lesions are non-obligate precursors, or whether they are definite risk indicators for invasive cancer.

Megan Troxell, MD, PhD, an associate professor of pathology at Oregon Health & Science University, recently published one of the few studies looking at precursor breast lesions, including atypia, and found a high rate of mutations in PIK3CA, a gene in the PI3 kinase pathway.7 Interestingly, in paired samples where she had the precursor lesion for patients who later developed cancer, oftentimes the mutations were present in the precursor atypia, but not present in the cancer. This finding suggests that the mutations may have something to do with breast proliferation, but perhaps not with the transformation to cancer. Another marker that has been studied in this context involves deletions of chromosome 16q, which can also be observed in breast atypia.

This is precisely the kind of research we need more of. We really need to understand the genes and proteins associated with the transformation process between atypia and cancer. But unfortunately, we don’t have the full molecular picture to help us get to that ideal goal of predicting, at the individual patient level, which patient with atypia will go on to develop cancer and which can be safely followed with very little risk of developing cancer. That’s the overall goal, and certainly one that Roche Diagnostics is interested in and following quite closely.

Regarding digital pathology, I agree with David that there is great potential for digital pathology to help pathologists be more objective in difficult areas of interpretation. Roche’s current focus is on digital algorithms using whole-slide imaging technology for immunohistochemistry markers. For example, we have FDA 510(k) clearance for the entire panel of breast cancer markers, including ER, PR, HER2, Ki-67, and p53.

But atypical hyperplasia of the breast is currently identified via morphologic diagnosis based on visual assessment of tissues stained with hematoxylin and eosin (H&E). So before the use of digital pathology can really take off, FDA will have to grant approval for a digital pathology scanner to be used for so-called “primary digital diagnosis.” Once that happens, the entire field can then work on the challenges of machine learning algorithms and software for image analysis, which in the long run hold the potential to standardize the reading and interpretation of difficult H&E morphologic diagnoses such as atypia of the breast. That is a long-term goal of Roche Diagnostics, but an important first step must be FDA approval of primary digital diagnosis.

CLP: Can mammography and clinical outcomes data also inform diagnoses and treatment selection?

Bloom: Context is everything in medicine, so yes, clinical and radiographic information is critical in the real world and simplifies many real-world problems. For example, distinguishing low-grade ductal carcinoma in situ from atypical intraductal epithelial hyperplasia on a core biopsy can be quite challenging. But if the next step in both cases is the same surgical procedure, then the distinction is mostly academic.

Dabbs: In the real world of practice, pathologists usually get a snippet of information regarding imaging findings and how the biopsy was performed. If the clinical history says “biopsy for suspicious calcification,” for instance, then the procedure usually performed would be stereotactic core biopsy; if the biopsy is to assess a mass, the procedure may be a core biopsy or perhaps an ultrasound-guided biopsy, and so on. So pathologists generally have some idea of the extent or measure of the lesion based on that rudimentary information.

At our institution, pathologists have desktop access to the radiographic imaging systems that the radiologists use. So they can just punch in medical record numbers to pull up an image, and they can look at it themselves, together with the radiologists’ interpretation.

Either way, that information is important and should be taken into account when determining what kind of lesion is being reviewed. The vast majority of calcification biopsies are benign, so it’s important for the pathologist to have that information. Without it, it can be hazardous for the pathologist to interpret biopsies—especially if they are in the grey zone of atypia and other difficult lesions.

CLP: Is there a need for additional research to refine the Elmore study findings? What study features would improve the accuracy of the findings and help to better define areas in need of revised practices?


Bloom: I am not sure the Elmore study needs to be refined further. It highlights that there is variability in interpretation of a pathology slide, just like there is variability in interpreting any complex dataset.

We are in an era when we should be able to integrate more and better information in order to improve our diagnostic accuracy. In the coming years, it will be essential that we figure out how to incorporate machine learning and molecular techniques (both in vivo and in vitro), and how to obtain additional molecular information from both the lesion and the patient's germline. Pathology interpretation is critical, but it is only one piece of a large puzzle.

Dabbs: Elmore’s recent JAMA paper cites an earlier paper, “Understanding diagnostic variability in breast pathology: lessons learned from an expert consensus review panel,” for which Elmore was the senior author.3 In that paper, the authors provide a very specific list of recommendations for accomplishing just what you’ve described—improving the accuracy of findings and helping to better define areas in need of revised practices. In their conclusion, the authors write: “our observations provide an improved understanding of the challenges involved in achieving and accurately and appropriately reporting levels of diagnostic agreement in pathology. Our findings provide useful insight for improving quality assurance in both research and clinical settings. This study could serve as a framework for the evaluation of observer variability and future studies addressing this issue in both breast pathology and other areas of pathology.”

Considering that many of the authors of this paper are also authors on the recent JAMA paper, it’s astonishing that the JAMA study didn’t make use of the very framework defined in the earlier paper. It certainly leads one to wonder and speculate about what agenda may have been behind the JAMA paper.

So the short answer to your question is yes, there are published papers that could help to better define areas in need of revised practices, and Elmore is one of the authors of such a paper.

CLP: Do you think the Elmore study and others that may follow will result in the issuance of additional guidelines? What should be the focus of such efforts?

Dabbs: I doubt that the Elmore study will result in the issuance of additional guidelines. Some of the authors on the paper are unhappy with the way it was written, and they’re unlikely to champion any further extension of the work. That’s not to say that there’s no room for improvement by way of additional guidelines. But I don’t think this is going to be the sentinel paper that sets pathologists on a new course for the future.

Walk: I agree. Because of the limitations of this study, I don’t see its findings leading to guidelines in this area.

Bloom: I think guidance similar to the ASCO/CAP HER2 guidelines would be helpful, reminding pathologists of the need for ongoing proficiency testing, review of diagnostic criteria, and so on.

I think that we should be leveraging access to subspecialty expertise through digital pathology. First we would need studies showing that diagnoses of atypia and malignancy made in the community setting would benefit from subspecialty review on a digital network. Such a study could be accomplished quite easily, for example, by obtaining a few hundred cases diagnosed as atypia in the community, scanning all slides digitally, and having a panel of expert pathologists review them.

In this way, we could determine whether there is such a thing as concordance among experts, and whether community pathologists would benefit by having access to such a network.

Steve Halasey is chief editor of CLP.

REFERENCES

1. Elmore JG, Longton GM, Carney PA, Geller BM, et al. Diagnostic concordance among pathologists interpreting breast biopsy specimens. JAMA. 2015;313(11):1122–1132; doi: 10.1001/jama.2015.1405.

2. Wolff AC, Hammond MEH, Hicks DG, et al. Recommendations for human epidermal growth factor receptor 2 testing in breast cancer: American Society of Clinical Oncology/College of American Pathologists clinical practice guideline update. Arch Pathol Lab Med. 2014;138:241–256; doi: 10.5858/arpa.2013-0953-SA.

3. Allison KH, Reisch LM, Carney PA, Weaver DL, et al. Understanding diagnostic variability in breast pathology: lessons learned from an expert consensus review panel. Histopathology. 2014;65:240–251; doi: 10.1111/his.12387.

4. Hartmann LC, Degnim AC, Santen RJ, et al. Atypical hyperplasia of the breast: risk assessment and management options. N Engl J Med. 2015;372:78–89; doi: 10.1056/NEJMsr1407164.

5. Darragh TM, Colgan TJ, Cox JT, et al. The lower anogenital squamous terminology standardization project for HPV-associated lesions: background and consensus recommendations from the College of American Pathologists and the American Society for Colposcopy and Cervical Pathology. J Low Genit Tract Dis. 2012;16(3):205–242; doi: 10.1097/LGT.0b013e31825c31dd.

6. Davidson NE, Rimm DL. Expertise vs evidence in assessment of breast biopsies: an atypical science. JAMA. 2015;313(11):1109–1110; doi: 10.1001/jama.2015.1945.

7. Troxell ML, Brunner AL, Neff T, et al. Phosphatidylinositol-3-kinase pathway mutations are common in breast columnar cell lesions. Mod Pathol. 2012;25(7):930–937; doi: 10.1038/modpathol.2012.55.