Sophisticated diagnostic equipment is designed to improve quality, increase throughput, and manage continued labor shortages. All diagnostic manufacturers can provide the end user with software specifications that simplify automatic sampling and predilution; ensure workflow efficiency with high-speed throughput and performance; provide accuracy in testing with precision optical systems; deliver real-time access and alerts for patient and QC packages; and offer mechanical alerts to any possible instrumentation failures.

Even with these capabilities, troubleshooting instrumentation creates unique challenges for medical technologists. Instrumentation can alert, flag, and warn that something is problematic, but it takes an experienced medical technologist to determine the root cause. Shifts and trends, reagent lot changes, calibration drift, patient samples, repeats, and intermittent mechanical errors all come into play when resolving assay issues. Replacing reagents, redoing calibration, and testing new quality-control material can be time-consuming, costly, and nonproductive activities that must nonetheless be performed and documented.

Identifying a Problem Source

Consider for a moment when your instrument gives an alert on a critical assay:

- Quality control has been flagged; a Westgard Rule for trending has been exceeded.

- Over the last several runs you notice that a larger number of patients are trending toward the high/low end of the assay.

- An increased number of patients exceeds the normal range.

- Other diagnostic tests do not correlate with abnormal results.

- Calibration appears to be drifting.

Figure 1. Millipore’s BioPak is an efficient ultrafiltration method for removing bacterial by-products.
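The quality-control flag in the checklist above can be illustrated with a short sketch. It assumes the "Westgard Rule for trending" refers to one of the commonly used shift rules, here 4:1s (four consecutive results beyond 1 SD on the same side of the mean) and 10x (ten consecutive results on the same side of the mean). The function names and the QC values are hypothetical, and a laboratory's own QC software may apply a different rule set.

```python
from typing import List, Sequence


def z_scores(values: Sequence[float], mean: float, sd: float) -> List[float]:
    """Express each QC result as a number of SDs from the target mean."""
    return [(v - mean) / sd for v in values]


def violates_4_1s(z: Sequence[float]) -> bool:
    """4:1s: the last four results all exceed +1 SD or all exceed -1 SD."""
    last = z[-4:]
    if len(last) < 4:
        return False
    return all(x > 1 for x in last) or all(x < -1 for x in last)


def violates_10x(z: Sequence[float]) -> bool:
    """10x: the last ten results all fall on the same side of the mean."""
    last = z[-10:]
    if len(last) < 10:
        return False
    return all(x > 0 for x in last) or all(x < 0 for x in last)


def qc_flags(values: Sequence[float], mean: float, sd: float) -> List[str]:
    """Return the names of the shift rules violated by the latest results."""
    z = z_scores(values, mean, sd)
    flags = []
    if violates_4_1s(z):
        flags.append("4_1s")
    if violates_10x(z):
        flags.append("10x")
    return flags


# Hypothetical control results (target mean 100, SD 2) creeping upward.
drifting = [100.5, 101.0, 100.8, 101.5, 101.2, 102.1, 102.4, 102.6, 102.3, 102.9]
print(qc_flags(drifting, mean=100.0, sd=2.0))
```

A flag of this kind says only that the control is shifting; it does not say whether the cause is the reagent, the calibration, the hardware, or the water.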

The first steps include reviewing the calibration and quality-control parameters for changes. Reagent lots are changed, recalibration is performed, and the patient samples are repeated but still yield the same results. Subsequent calls to technical service and review of the instrument’s mechanical functions do not correct the problem. After all corrective actions appear to fail, a visit by an onsite service engineer is required. Decontaminating the instrument often is suggested by the diagnostic company. This is a lengthy process that takes your instrument out of production when only one assay has failed.

In the end, cleaning the instrument corrects the problem. Having evaluated the obvious issues, the technologist is puzzled. Why does decontaminating the instrument often solve the problem?

Water in Clinical Assays

Figure 2. Used for directly feeding clinical analyzers, Millipore’s Elix Clinical with BioPak ensures water quality.

One parameter has not been considered, even though every end user relies on it on every kind of diagnostic instrument: water. Used in a variety of assays, water is a major reagent in clinical chemistry and immunoassay testing. Analytical factors linked to water quality need to be controlled and optimized to reduce the number of test failures. Drifting calibrations, high blanks, and patient values trending toward the high/low end of the assay can stem from poor water quality, which then contributes to erroneous patient results.

Certain assays are very sensitive to water quality, particularly immunoassays, where antigen-antibody detection is affected. Water quality should be monitored closely, along with other variables, because subtle changes in water quality over time can lead to serious analyte failures due to bacterial contamination. If water quality is not scrutinized, the result is biofilm buildup in the water system and, consequently, in the tubing, water bottles, and manifolds of the chemistry analyzer.

Unscheduled decontaminations by high-purity water providers and diagnostic companies are costly. Therefore, maintaining the purification system and monitoring water quality parameters are critical in a clinical laboratory. Maintenance ensures optimum analyzer performance and assay results. Monitoring water quality is key to establishing preventive maintenance and reducing the cost of downtime caused by decontamination.
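As a minimal sketch of what routine monitoring could look like, the example below checks each water reading against limits and watches for a steadily rising bacterial count, which could prompt preventive maintenance before an assay fails. The class and field names are hypothetical, and the default limits are placeholders for illustration only; actual limits should come from the analyzer manufacturer's specification and the applicable guideline.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class WaterReading:
    resistivity_mohm_cm: float   # higher means fewer ions
    bacteria_cfu_per_ml: float   # lower is better
    toc_ppb: float               # total organic carbon, lower is better


@dataclass
class WaterLimits:
    # Placeholder limits (assumptions); replace with the laboratory's own.
    min_resistivity_mohm_cm: float = 10.0
    max_bacteria_cfu_per_ml: float = 10.0
    max_toc_ppb: float = 500.0


def check_reading(r: WaterReading, lim: WaterLimits) -> List[str]:
    """List every parameter of one reading that is outside its limit."""
    problems = []
    if r.resistivity_mohm_cm < lim.min_resistivity_mohm_cm:
        problems.append("resistivity below limit")
    if r.bacteria_cfu_per_ml > lim.max_bacteria_cfu_per_ml:
        problems.append("bacterial count above limit")
    if r.toc_ppb > lim.max_toc_ppb:
        problems.append("TOC above limit")
    return problems


def rising_bacteria(history: List[WaterReading], runs: int = 3) -> bool:
    """True if the bacterial count rose on each of the last `runs` readings."""
    counts = [r.bacteria_cfu_per_ml for r in history[-(runs + 1):]]
    if len(counts) < runs + 1:
        return False
    return all(b > a for a, b in zip(counts, counts[1:]))


# Hypothetical weekly readings: still within limits, but bacteria are climbing.
history = [
    WaterReading(15.2, 1, 80),
    WaterReading(15.0, 2, 85),
    WaterReading(14.8, 4, 90),
    WaterReading(14.5, 7, 110),
]
print(check_reading(history[-1], WaterLimits()))
print(rising_bacteria(history))
```

In this example every reading passes its limits, yet the upward trend in bacterial count is the kind of early signal that allows sanitization to be scheduled rather than forced.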

New assay development is a key selling feature of all instrumentation; as the research progresses, smaller sample volumes and reaction vessels are subjected to harsher reaction environments. General chemistry, enzymatic assays, immunoassays, TDMs, and nucleic acid-binding assays easily can become contaminated with bacteria and ions that previously were not a problem. Bacteria release enzymes, and ions mimic behaviors of enzymes targeted or used in the assay methods. Some ions are used as cofactors, while others act as inhibitors.

Often, little consideration is allotted to water quality, although it is an integral part of the instrument and testing method. When purchasing new laboratory instrumentation, few laboratory managers consider the quality of water required or its impact on the testing methods until a purchase is completed. It is simply assumed that current laboratory water systems will meet these needs.

Purifying Water to Meet Requirements

Millipore Corp has experience in the field of high-purity water production and has worked closely with pharmaceutical and diagnostic manufacturers. Following discussions and investigations with diagnostic manufacturers regarding water quality and how contaminated water affected their most sensitive assays, research was undertaken on the hypothesis that bacterial alkaline phosphatase (ALP) might be the root cause of some issues.

Most manufacturers of water systems use a 0.22-µm filter as part of the final filtration process before water enters the analyzer. These filters remove bacteria and particles larger than 0.22 µm. However, research has demonstrated that as bacteria die and decay behind the 0.22-µm filter, bacterial ALP is released from the cells and washed downstream. The use of ALP as a detection enzyme is common in numerous biomedical methods, including enzyme immunoassays and ALP-labeled nucleic acid probes. Most assays of this type are performed using calf intestine ALP (CIP). Bacterial ALP released following the proliferation of a bacterial species in pure water can interfere with the CIP in enzyme immunoassays.

Ultrafiltration methods can be used efficiently to remove bacterial by-products (bacterial alkaline phosphatase, endotoxins) from the water (Figure 1). Research comparing the effectiveness of a 13-kDa ultrafiltration device with a 0.22-µm filtration unit demonstrated that ultrafiltration provides ALP-free water. A microbiological filter is only marginally efficient at removing ALP, since the ALP it does retain is held by adsorption rather than by filtration. Using smaller pore sizes can provide a solution for ALP removal.1

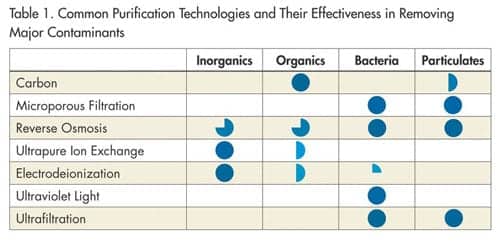

In the manufacturing of high-purity water systems, Millipore uses a combination of purification technologies (Table 1) designed specifically for the clinical laboratory. The purification system reduces contaminant levels and ensures that the water fed to the clinical analyzer is of consistent quality. Elix® Clinical systems combine different technologies to build a complete water-purification system for the clinical laboratory setting.

Typical purification technologies include general filtration to reduce the incoming particle load, and reverse osmosis (RO) to decrease the load of ions, organics, colloids, and particulate. However, RO water is susceptible to the daily and seasonal variations in tap water quality. To eliminate variations and ensure more constant quality, electrodeionization is now included in the purification process. This technology uses selective anion and cation semipermeable membranes as well as ion exchange resins that are regenerated with a small electrical current. To reach clinical laboratory reagent water standards, additional purification requires ion exchange resins, which remove ions down to a very low level.

CLSI guideline recommendations

In recognition of the impact that water quality has on patient results, the Clinical and Laboratory Standards Institute (CLSI) published its latest guidelines in July 2006, entitled “Preparation and testing of reagent water in the clinical laboratory” (C3-A4 Vol. 26 No. 22). The new CLSI guidelines were written to define a standard, suitable level of water purity, so that clinical chemistry testing methods are not affected by impurities from the source water once it has been processed by a high-purity water system.

Type I, II, and III nomenclature has been replaced with purity types that provide more meaningful parameters in the clinical laboratory. Clinical Laboratory Reagent Water replaces Type I and II water for most applications. Instrument Feed Water, a new designation, will allow instrument manufacturers to clarify specifications for their particular methods. Autoclave and wash water meets the requirements of previously classified Type III quality. These new definitions include parameters not previously specified. Special Reagent Water also may be specified when additional parameters are needed to ensure that water quality is suitable for specific applications (e.g., DNA-based assays, mass spectrometry-based assays). A complete review of this CLSI document should be done when considering new applications (note: reference the original document for further information) to ensure contaminants found in the source water do not become an issue.

— JL and SM

The most crucial stages consist of bacterial removal. This is accomplished by a variety of means, including screen membrane filtration (0.22 µm), germicidal UV treatment at 254 nm, and chemical sanitization. Typically, a 0.22-µm membrane filter at the inlet to the analyzer controls the release of bacteria from the water-purification system. As a result of Millipore’s research, ultrafiltration has been proposed as a method of eliminating bacterial by-products (ALP, endotoxins) from the final product water. This ensures the highest quality water for immunoassay and general chemistry applications.
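The purification stages described in this article can be summarized as a small lookup that shows which contaminant classes a given system leaves unaddressed. This is a sketch only: the stage names and target lists are taken from the text, the ordering is an assumption, and the function names are hypothetical. Chemical sanitization is omitted because it is a maintenance procedure rather than an in-line stage.

```python
from typing import List, Tuple

# (stage, contaminant classes it targets), as described in the article.
# The order shown is assumed for illustration, not a product specification.
PURIFICATION_STAGES: List[Tuple[str, List[str]]] = [
    ("general filtration", ["particles"]),
    ("reverse osmosis", ["ions", "organics", "colloids", "particles"]),
    ("electrodeionization", ["ions"]),
    ("ion exchange resin", ["ions"]),
    ("UV 254 nm", ["bacteria"]),
    ("0.22 um membrane filter", ["bacteria", "particles"]),
    ("ultrafiltration", ["bacterial by-products"]),
]


def stages_targeting(contaminant: str) -> List[str]:
    """Names of the stages that target the given contaminant class."""
    return [name for name, targets in PURIFICATION_STAGES if contaminant in targets]


def unaddressed(installed: List[str]) -> List[str]:
    """Contaminant classes that no installed stage targets."""
    all_classes: List[str] = []
    for _, targets in PURIFICATION_STAGES:
        for t in targets:
            if t not in all_classes:
                all_classes.append(t)
    covered = {
        t
        for name, targets in PURIFICATION_STAGES
        if name in installed
        for t in targets
    }
    return [c for c in all_classes if c not in covered]


# A system that ends at the 0.22 um filter, with no ultrafiltration step.
without_uf = [name for name, _ in PURIFICATION_STAGES if name != "ultrafiltration"]
print(unaddressed(without_uf))
print(stages_targeting("bacteria"))
```

The lookup makes the article's point concrete: a train that stops at the 0.22-µm membrane removes bacteria but has no stage aimed at the by-products they release.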

The combination of Elix Clinical and BioPak™ (Figure 2) offers optimum performance of assays impacted by water quality. Consistent performance of quality control and patient results can be obtained with these technologies to meet the new Clinical and Laboratory Standards Institute guidelines. Delivering improved total laboratory quality, while dealing with cost constraints and labor shortages, is Millipore’s commitment to the clinical market.

Conclusion

In summary, all parameters, from feed water to high-purity water production, need to be monitored on a regular basis. The water quality delivered to the analyzer is as important as any other reagent. Control of bacteria and their by-products with Elix technology and BioPak filters provides the highest quality water for assays sensitive to these contaminants. Control of water quality eliminates frequent decontamination. This optimizes analyzer performance and reduces downtime that can be costly to the customer and the analyzer manufacturer.

Johanne Long is the clinical product marketing manager, and Stéphane Mabic is the worldwide applications support manager, Bioscience Division, Millipore Corp. They can be contacted at 33 1-30-12-71-40; www.millipore.com.

Reference

- Bôle J, Mabic S. Utilizing ultrafiltration to remove alkaline phosphatase from clinical analyzer water. Clin Chem Lab Med. 2006;44(5):603-608.