Proscia, Philadelphia, a leading provider of artificial intelligence (AI)-enabled digital pathology software, has released the findings of a new study on the first deep learning system with proven accuracy in real laboratory environments [1]. The study is the largest AI validation study conducted in pathology to date and supports the growing impact of AI in cancer diagnosis. The paper’s results serve as the foundation for enabling faster and more accurate diagnosis to improve treatment decisions and patient outcomes.

The antiquated standard of care for diagnosing cancer relies on the pathologist’s assessment of patterns in tissue as viewed under a microscope. This manual and subjective practice cannot keep pace with the growing demand for diagnostic services amid a rapidly declining pathologist population and can lead to a lack of confidence in treatment decisions.

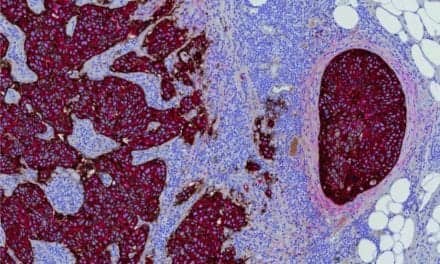

Proscia’s study describes a deep learning system that achieves 98% accuracy in classifying whole-slide images of skin biopsies in real laboratory settings. To achieve this consistent performance, the system was designed using high-quality and diverse deidentified data. It was developed using thousands of images from Dermatopathology Laboratory of Central States, one of the largest dermatopathology laboratories in the United States, and tested on an uncurated set of 13,537 images from Cockerell Dermatopathology, Thomas Jefferson University Hospital, and University of Florida to account for the wide variety of diseases seen in practice. This volume of test data, along with the different methods of biopsy, preparation of tissue, tissue staining procedures, and digital scanning processes used across the test laboratories, indicates that the AI system is generalizable across multiple laboratory settings.

For additional information, visit Proscia.

Reference

- Ianni JD, Soans RE, Sankarapandian S, et al. Tailored for real-world: a whole-slide image classification system validated on uncurated multisite data emulating the prospective pathology workload. Sci Rep. 2020;10:3217. doi: 10.1038/s41598-020-59985-2.